Yes, hiding HTTP referers on your outgoing links is still a thing. Even though standards have evolved, they rely on your users having a current browser, which is really something outside of your control. Even then, many larger websites have technologies in place to mask the HTTP referer on outgoing links. This is to reliably avoid leaking any compromising information to a third party.

If you do not want to spend time on implementing your own solution, you can fortunately rely on a plethora of de-referer services out there. I myself have been running such a de-referer service: url.rw.
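For a rough idea of what "your own solution" involves, here is a minimal sketch of the response an intermediate de-referer page could return. It is not how url.rw is implemented; the function name `build_dereferer_response` and the choice of a meta refresh plus a `Referrer-Policy: no-referrer` header are my assumptions for illustration. The idea is that the browser navigates onward from the intermediate page, so the destination sees at most that page as the referer, not the page the user originally came from.

```python
from html import escape
from typing import Dict, Tuple
from urllib.parse import urlsplit


def build_dereferer_response(target: str) -> Tuple[int, Dict[str, str], str]:
    """Return (status, headers, body) for an intermediate hop to `target`.

    Hypothetical helper for illustration only.
    """
    parts = urlsplit(target)
    # Only forward to absolute http(s) URLs; reject javascript:, data:, etc.
    if parts.scheme not in ("http", "https") or not parts.netloc:
        return 400, {"Content-Type": "text/plain; charset=utf-8"}, "Invalid target URL\n"

    # Escape the URL before embedding it in HTML attributes.
    safe = escape(target, quote=True)
    headers = {
        "Content-Type": "text/html; charset=utf-8",
        # Ask current browsers not to send a Referer on the next navigation.
        "Referrer-Policy": "no-referrer",
        "Cache-Control": "no-store",
    }
    body = (
        "<!doctype html><html><head>"
        '<meta name="referrer" content="no-referrer">'
        f'<meta http-equiv="refresh" content="0; url={safe}">'
        "</head><body>"
        # Fallback link in case the automatic refresh is blocked.
        f'<a href="{safe}" rel="noreferrer">Continue</a>'
        "</body></html>"
    )
    return 200, headers, body
```

Any web server or framework can then send these three values back to the browser. Rejecting non-HTTP schemes keeps the page from being abused to launch `javascript:` or `data:` URLs.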

Given that the tech behind running such a service is pretty simple, your possibilities to stand out from the masses are minimal. For me, though, I chose:

- Lowest possible response times, no matter which corner of the world you are using the service from

- Highest possible quality, through reliability and zero annoyance (no tracking and no ads)

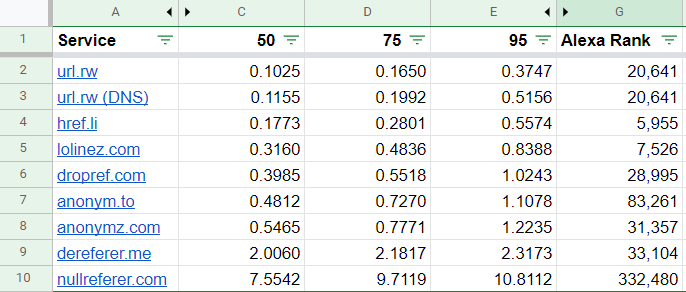

Both have now been achieved with the help of AWS Global Accelerator. Url.rw is now by far the fastest from all corners of the world, even beating Automattic’s href.li:

The results show clearly: measured via whereisitup.com from over 250 different locations, 95% of all requests are delivered in less than half a second, and on average within a tenth of a second.
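To make those two figures concrete, here is a small sketch of how a 95th percentile and an average can be computed from a list of response times. The sample values are made up for illustration and are not the whereisitup.com measurements; `percentile` is a hypothetical helper using the simple nearest-rank method.

```python
import math
from statistics import mean


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


# Hypothetical response times in seconds, one per test location.
latencies = [0.03, 0.04, 0.05, 0.05, 0.06, 0.07, 0.08, 0.09, 0.11, 0.42]

p95 = percentile(latencies, 95)
avg = mean(latencies)
print(f"p95={p95:.2f}s avg={avg:.2f}s")
```

A single slow location barely moves the average but decides the 95th percentile, which is why it is worth looking at both numbers.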

From Google to Cloudflare to AWS!

Initially, I used Google App Engine. It was a very care-free and cost-efficient way to run url.rw. I even did a talk about that at Cape Town’s DevOps Days in 2018. However, there were two downsides:

- Response times were really bad at times due to cold starts. Avoiding those adds significantly to the costs (worth it for larger apps, though).

- It is not possible to have multiple App Engine regions run concurrently for the same domain – so I was stuck with a single location.

So I decided to give Cloudflare Workers a shot: it greatly improved latency around the globe. Unfortunately, as with any type of “cloud function”, cold starts were really bad as well, and a lot more unpredictable than on Google App Engine 😥

They did improve a bit, but it was still pretty bad compared to what I could achieve with cheap VMs.

Additionally, even if you are paying for Workers and are on a Pro account, Cloudflare will not serve traffic from all edge locations. Australia is hit especially hard.

Note: this is where AWS pay-by-usage shines over a flat-rate pricing model such as Cloudflare's.

My last option: AWS. With a bunch of t4g.nano EC2 instances and nginx with njs, I was able to create a very simple system and launch it in most of the available AWS regions (over 20!!).

With the help of Route53 latency-based routing, I was able to route users to the closest region via DNS. Even at this point I was mostly faster than other services, but it had downsides as well:

- DNS-based routing is relatively inaccurate.

- Using DNS for failover is really not a good option, as it's highly unreliable.
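One way to see why DNS failover is slow: a health check first needs several failed probes before a region is marked unhealthy, and after that resolvers and clients may keep using the old answer until its TTL expires. The sketch below adds those two delays together. The function name and the example numbers (30-second probes, 3 failures, 60-second TTL) are assumptions for illustration, not the settings I actually ran.

```python
def worst_case_dns_failover_seconds(check_interval_s, failure_threshold, record_ttl_s):
    """Rough upper bound on how long clients may keep hitting a dead region.

    Hypothetical helper: detection time plus the time a cached DNS answer
    can remain valid after the record has been changed.
    """
    detection_s = check_interval_s * failure_threshold
    return detection_s + record_ttl_s


# Assumed example values: probe every 30 s, 3 failures to trip, TTL of 60 s.
print(worst_case_dns_failover_seconds(30, 3, 60), "seconds")
```

In practice it can be worse than this bound, because some resolvers and clients hold on to answers for longer than the TTL allows.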

So I gave AWS Global Accelerator a shot, an “AnyCast”-like service provided by AWS. I am genuinely impressed.

What’s good about AWS Global Accelerator?

It was really easy to understand and set up, especially with Terraform. Latency is really good. I was expecting a higher latency in regions where I have an EC2 instance, but it is usually even lower. Finally, having “AnyCast” instead of doing DNS-based failover (across regions) is unbelievably great.

What’s bad about AWS Global Accelerator?

Currently there is no IPv6 support (update: now available), so I unfortunately still need to run DNS-based geo routing and failover just for the AAAA records. I do hope IPv6 can be added to my existing resource in the future without any extra costs.

Less critical, but it would be nice to be able to customize the PTR records; this was recently added to Elastic IPs.

Final words

It is really great to see that it is finally possible to have “AnyCast” with AWS, which has traditionally been very DNS-based.

Offering a de-referer service such as url.rw is really fun. It allows me to play around with different technologies while offering a service that is being used at a larger and global scale – by over 80 million every month.